AWS DeepRacer: How to train a model in 15 minutes

In a previous article I described what DeepRacer is and how I got into it. This article covers the technical part: how the scoring function was designed and why it works.

What most people did

Almost all scoring functions I have heard of score mainly based on where the car currently is. The simplest approach is provided as an example by AWS: score high if the car is close to the central line. More sophisticated approaches score high if the car is close to a hand-crafted, more or less ideal line, e.g. a line that is on the right before a left turn and on the left in the middle of the turn. The proximal policy optimization (PPO) algorithm is then able to learn which actions were good and which were not.
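For comparison, a position-based score in the spirit of the central-line approach could look roughly like this. It is a minimal sketch, not the actual AWS example: the linear falloff and the floor value of 0.001 are assumptions made for illustration. `track_width` and `distance_from_center` are input parameters that DeepRacer passes to the scoring function.

```python
def centerline_reward(params):
    """Score high when the car is close to the central line."""
    half_width = 0.5 * params["track_width"]
    distance = params["distance_from_center"]

    # Assumed shape: 1.0 on the central line, falling linearly to
    # (almost) zero at the edge of the track.
    score = 1.0 - distance / half_width

    # Keep the score slightly positive even when the car is off the track.
    return float(max(score, 0.001))
```

Note that this function never looks at the steering angle. It only rates where earlier actions have brought the car.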

What I did

My approach was different: I used the steering direction of the car (which is available as a parameter in the scoring function during training).

My scoring function works as follows:

Draw a circle with a fixed radius around the car.

Take the intersection of this circle with the central line in front of the car.

Aim for this point, i.e. the score is highest if the steering direction is such that the front wheels point there. The more it deviates, the lower the score.

I adjusted the radius of the circle depending on the track and how narrow the corners are. And that is it. This got me to the top 8 worldwide. Other than that I only needed to adjust one of the training parameters: I set the discount factor to 0.5 rather than the default of 0.999.
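The circle-and-aim steps described above can be sketched roughly as follows. This is an illustration, not the code from the repository linked at the end: the helper names, the radius of 0.9, the linear falloff over 60 degrees and the floor value are all assumptions. The entries read from `params` (`x`, `y`, `heading`, `steering_angle`, `waypoints`, `closest_waypoints`) are input parameters that DeepRacer provides during training.

```python
import math

LOOKAHEAD_RADIUS = 0.9   # hypothetical value, tuned per track
MAX_ERROR_DEG = 60.0     # hypothetical: the score reaches its floor at this deviation


def circle_exit_point(center, radius, p1, p2):
    """Point where the segment p1 -> p2 leaves the circle, or None."""
    dx, dy = p2[0] - p1[0], p2[1] - p1[1]
    fx, fy = p1[0] - center[0], p1[1] - center[1]
    a = dx * dx + dy * dy
    if a == 0.0:  # duplicate waypoints form an empty segment
        return None
    b = 2.0 * (fx * dx + fy * dy)
    c = fx * fx + fy * fy - radius * radius
    disc = b * b - 4.0 * a * c
    if disc < 0.0:  # the line through p1, p2 misses the circle
        return None
    # The larger root is the crossing in the direction of travel.
    t = (-b + math.sqrt(disc)) / (2.0 * a)
    if 0.0 <= t <= 1.0:
        return (p1[0] + t * dx, p1[1] + t * dy)
    return None


def target_point(params, radius):
    """Intersection of the circle around the car with the central line ahead."""
    waypoints = params["waypoints"]
    start = params["closest_waypoints"][1]  # index of the next waypoint ahead
    car = (params["x"], params["y"])
    n = len(waypoints)
    # Walk forward along the central line, segment by segment.
    for i in range(n):
        p1 = waypoints[(start - 1 + i) % n]
        p2 = waypoints[(start + i) % n]
        hit = circle_exit_point(car, radius, p1, p2)
        if hit is not None:
            return hit
    return waypoints[start]  # fallback if the circle never meets the line


def reward_function(params):
    tx, ty = target_point(params, LOOKAHEAD_RADIUS)
    target_dir = math.degrees(math.atan2(ty - params["y"], tx - params["x"]))
    # Direction of the front wheels: car heading plus steering angle (degrees).
    wheel_dir = params["heading"] + params["steering_angle"]
    # Wrap the difference into the range [-180, 180).
    error = (target_dir - wheel_dir + 180.0) % 360.0 - 180.0
    score = 1.0 - abs(error) / MAX_ERROR_DEG
    return float(max(score, 0.01))
```

On a straight track with the car on the central line and heading along it, this gives the maximum score for a steering angle of zero and a lower score for any other angle.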

Why is this a good idea?

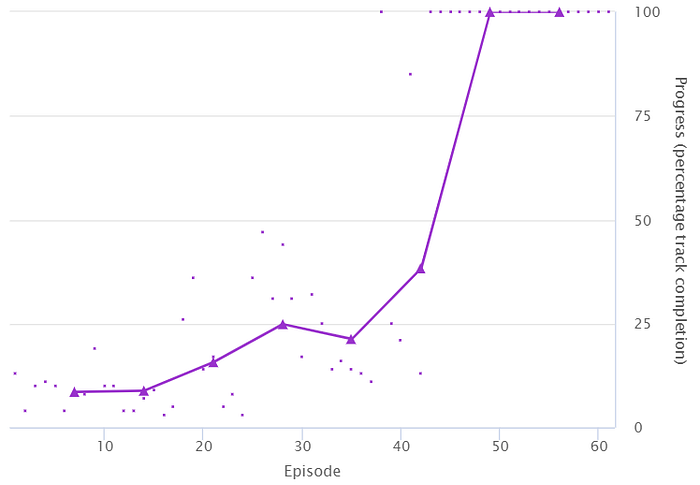

After about 15 minutes of training the car completes its first lap. One minute later it completes another lap, and after this it can run 50 more without a single crash. Other approaches usually took many hours, if not days, to converge to a somewhat stable model. But why?

Quick response leads to faster learning: Reinforcement learning is about rewarding good behavior. The clearer the connection between the behavior and the score is, the quicker the algorithm can learn. Imagine you try to teach your dog something. You could do this by providing a reward every evening, say more or better food, if it behaved well. Though this does work in principle, it will take pretty much forever. If you want to teach it tricks, you pretty much have to provide a reward immediately after something was done well.

It is the same with the scoring function in DeepRacer: One could in principle provide a score exclusively for finishing a lap. The PPO algorithm would eventually work out how to steer. But it can only improve once it finishes a lap at all, and even then it would barely learn from each success. In fact, doing slightly better by providing a constant score for not crashing already allows the algorithm to learn slowly (but visibly). Of course, scoring according to the position of the car is much more direct. Even so, it only provides some kind of average rating for the last couple of frames that led the car to the place it is now. There may have been a couple of bad steering commands in the past that were only compensated for by other commands later on. Both would end up being encouraged, and only eventually would the good commands end up with better statistics and hence be encouraged most often.
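The two sparse variants mentioned above could be written like this. Both are minimal sketches with assumed score values (100.0, 1.0 and a floor of 0.001), using the DeepRacer input parameters `progress` (percentage of the lap completed) and `all_wheels_on_track`.

```python
def lap_only_reward(params):
    """Score only when the lap is finished: feedback arrives very late."""
    return 100.0 if params["progress"] >= 100.0 else 0.001


def survival_reward(params):
    """Constant score for every step in which the car stays on the track."""
    return 1.0 if params["all_wheels_on_track"] else 0.001
```

Neither function says anything about the steering command that was just issued, so the learning algorithm has to work out from many episodes which commands were responsible for the outcome.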

Pointing to the “right” direction is as direct as it gets. It immediately tells the learning algorithm whether this steering direction is good or not. There is no averaging over multiple frames needed. This is also the reason I reduced the discount factor: the score does not rate good actions in the past at all (in contrast to scoring according to position). I could probably have reduced the discount factor even further, but I have not had time to play around with it yet.
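To get a feeling for what the discount factor does, here is a small sketch using the standard definition from reinforcement learning: a score received k steps in the future is weighted by the discount factor to the power of k. The function names are made up for this illustration.

```python
def discount_weights(gamma, steps):
    """Weight given to the score received k steps ahead, for k = 0 .. steps-1."""
    return [gamma ** k for k in range(steps)]


def effective_horizon(gamma):
    """Sum of all weights, 1 / (1 - gamma): roughly how many steps count."""
    return 1.0 / (1.0 - gamma)


print(discount_weights(0.5, 4))   # [1.0, 0.5, 0.25, 0.125]
print(effective_horizon(0.5))     # 2.0
print(effective_horizon(0.999))   # close to 1000
```

With a discount factor of 0.5 almost all of the weight sits on the next one or two frames, which matches a score that rates the current steering command directly. With 0.999 the weights decay so slowly that roughly a thousand future frames contribute.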

This direct scoring is the main reason this approach learns so fast.

Using local information makes learning easier: Ultimately the car has to drive without any knowledge of waypoints or of where exactly it is. It can only work with the camera image and make a decision. This means that it is much easier to learn from a scoring function that is based only on information that can be seen. I am using only information about the central line right in front of the car, which can usually be seen. A perfectly optimized ideal line would (at least in principle) also depend on parts of the track that cannot even be seen at the time of making the decision. That does not mean that it is impossible to learn this more complicated path, just that it is more difficult.

Cutting corners is good: This should not be too surprising. It is faster because the path gets shorter this way. It also reduces the risk of running off the track. Finally, it also makes it easier to keep the eyes on the road. If the car is on the right in a left turn, the camera mostly sees green and only little of the track, so it is harder to decide in which direction to steer. Increasing the radius in the scoring function described above leads to a direction that cuts corners more strongly.
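The effect of the radius can be illustrated with a bit of geometry. The sketch below assumes an idealized situation that is not taken from the original setup: the central line is a circular arc with a given turn radius, and the car sits on it, heading along the tangent. The target point is then the end of a chord whose length equals the radius of the circle around the car, and the function names are made up for this example.

```python
import math


def aim_angle_deg(lookahead, turn_radius):
    """Angle between the car's heading and the target point (tangent-chord angle)."""
    if lookahead > 2.0 * turn_radius:
        raise ValueError("circle around the car is larger than the turn itself")
    return math.degrees(math.asin(lookahead / (2.0 * turn_radius)))


def cut_depth(lookahead, turn_radius):
    """Largest gap between the straight line to the target and the central line."""
    if lookahead > 2.0 * turn_radius:
        raise ValueError("circle around the car is larger than the turn itself")
    half_chord = 0.5 * lookahead
    return turn_radius - math.sqrt(turn_radius ** 2 - half_chord ** 2)


for lookahead in (0.5, 1.0, 1.5):
    print(lookahead, aim_angle_deg(lookahead, 1.0), cut_depth(lookahead, 1.0))
```

Both values grow with the radius of the circle: the car is asked to steer further into the turn, and the straight line towards the target runs further inside the central line.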

Isn’t this cheating?

Well, it feels a bit like cheating at first, right? After all, there is an elaborate training algorithm that can figure out how to steer. And now we tell the car directly how it should steer using “complicated mathematics”.

That is true, but the challenge is not to learn which direction is good to steer in, given the waypoints and the coordinates of the car. The difficult part is to train a neural network in such a way that it can find this direction based on the camera image. It is only during training that we can use the position of the car and the track, because this information is so kindly provided by the virtual environment.

Providing scores based on position puts an extra burden on the neural network: it also has to learn how the steering direction affects the position.

In summary, one should put as much knowledge of the problem as possible into the scoring function, to minimize the amount of information that the neural network needs to learn and to make the feedback for the learning as direct as possible.

Edit (Oct. 2020): Now that there are obstacles or other cars on the track, the approach I have taken no longer works. However, it could be extended by taking into account where the obstacles are when deciding on a steering direction. I am curious to see if anyone implements this approach or what else people come up with.

The scoring function and parameters used for training can be found here. Leave me a star if you have a GitHub account ;)

https://github.com/falktan/deepracer